In data centers, about 40 per cent of the total electrical energy is consumed for cooling the IT equipment. Cooling costs are thus one of the major contributors to the total electricity bill of large data centers. Two major factors in particular affect data center cooling energy consumption: air flow management and data center location selection. Two cooling systems, the computer room air conditioning (CRAC) system and the airside economiser (ASE), have been used to cool large data centers. Cooling efficiency and operating costs are found to vary significantly with climate conditions, energy prices and cooling technologies. Because climate is the major factor affecting airside economiser performance, employing the airside economiser in a cold climate yields much lower energy consumption and operating costs.

The worldwide energy consumption of data centers has increased dramatically, now accounting for about 1.3 per cent of the world’s electricity usage. Data centers have evolved significantly during the past decades by adopting more efficient technologies and practices in data center infrastructure management (DCIM). It is estimated that the market will grow by about 5 per cent yearly to reach USD 152 billion in 2016. Meanwhile, there is a trend to build mega data centers with capacities over 40 MW. Energy efficiency is an important issue in data centers for minimising environmental impact, lowering energy costs and optimising data center operating performance.

A modern data center has a large room with many rows of racks filled with a huge number of servers and other IT equipment used for processing, storing and transmitting digital information, as shown in Figure 1. A large amount of heat is generated by these thousands of servers and other IT equipment. To maintain the reliability of the servers and other IT equipment in the data center, it is important to maintain proper temperature and humidity conditions. Figure 2 reports the results of an investigation of 10 randomly selected data centers. It shows the representative power consumption distribution and its variation: cooling of the IT equipment accounts for between 30 per cent and 55 per cent of the total energy consumed. Cooling and ventilation systems consume on average about 40 per cent of the total energy used. Therefore, how to reduce power consumption and power costs, increase cooling efficiency and maximise availability must be considered before a data center is built.
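To make these shares concrete, the following minimal sketch converts a facility's total annual energy into cooling energy using the 30, 40 and 55 per cent shares reported for Figure 2. The function name and the 10 GWh total are illustrative assumptions, not data from the investigation.

```python
def cooling_energy_kwh(total_energy_kwh, cooling_share):
    """Energy used by cooling, given total facility energy and the cooling share (0..1)."""
    if not 0.0 <= cooling_share <= 1.0:
        raise ValueError("cooling_share must be between 0 and 1")
    return total_energy_kwh * cooling_share

# Hypothetical facility: 10 GWh of total electrical energy per year (assumed value).
TOTAL_KWH = 10_000_000

# Shares taken from the text: low end, average, high end of the reported spread.
for label, share in [("low", 0.30), ("average", 0.40), ("high", 0.55)]:
    print(f"{label}: {cooling_energy_kwh(TOTAL_KWH, share):,.0f} kWh")
```

For this assumed facility, the gap between the low and high ends of the spread is 2.5 GWh per year, which suggests how much the cooling design alone can move the electricity bill.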

Figure 1. Cooling of a modern data center.

Figure 2. Data center power consumption distribution and variation.

IT devices place strict requirements on their working conditions (Table 1), especially on the control of indoor temperature (22±2 °C) and humidity (50±5%). The air conditioning system is therefore of great significance for space cooling in such data centers, considering the large and continuous heat emissions. Figure 3 shows the typical refrigeration system for data centers. The electrical chiller produces low-temperature water in its evaporator, and the chilled water is then delivered to the terminal air handling units to remove the heat emitted by the racks. The condensation heat of the chiller is rejected to the ambient through the cooling tower.
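The temperature and humidity bands quoted above can be expressed as a simple check on sensor readings. The sketch below is illustrative only: the function name, constant names and sample readings are assumptions, and a real monitoring system would take its limits from the equipment specification.

```python
# Limits quoted in the text: 22 +/- 2 degrees C and 50 +/- 5 % relative humidity.
TEMP_SETPOINT_C, TEMP_TOL_C = 22.0, 2.0
RH_SETPOINT_PCT, RH_TOL_PCT = 50.0, 5.0

def within_envelope(temp_c, rh_pct):
    """Return True if a reading lies inside both the temperature and humidity bands."""
    temp_ok = abs(temp_c - TEMP_SETPOINT_C) <= TEMP_TOL_C
    rh_ok = abs(rh_pct - RH_SETPOINT_PCT) <= RH_TOL_PCT
    return temp_ok and rh_ok

# Hypothetical sensor readings (temperature in degrees C, relative humidity in %).
readings = [(21.5, 48.0), (24.5, 50.0), (23.0, 57.0)]
for temp_c, rh_pct in readings:
    status = "ok" if within_envelope(temp_c, rh_pct) else "out of range"
    print(f"{temp_c} C, {rh_pct} %: {status}")
```

Only the first of the three sample readings satisfies both bands; the second is too warm and the third too humid.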

Figure 3. Typical refrigeration system in data centers.

Table 1. Summary of usual data center thermal loads and temperature limits.

Data Center Cooling Systems

Air cooled systems

The terminal cooling equipment should provide air with the right cooling capacity and proper distribution. Several parameters can influence the cooling efficiency: the ceiling height, where hot air stratification may occur; the raised floor or dropped ceiling height (Figure 4), which is important for achieving correct air distribution among the IT equipment; and the airflow direction in the room, as shown in Figure 5.

Figure 4. Cold-aisle containment system (CACS) deployed with a room-based cooling approach

Two major air distribution problems have been identified in data centers: by-pass air and recirculation air. Recirculation occurs when airflow to the equipment is not sufficient and part of the hot air is recirculated, which can result in a considerable difference between the inlet temperatures at the bottom and the top of the rack. By-pass of the cold air occurs due to a high flow rate or leaks in the cold air path. In this case, part of the cold air stream passes directly from the cold air supply to the exhaust air without contributing to the cooling process. This poor air management results in low cooling efficiency and generates a vicious cycle of rising local temperatures. In fact, about one rack in ten operates at a temperature above the standard recommendations, and the majority of hot spots occur in data centers with a light load, indicating that the main cause of hot spots is poor air management. To prevent hot spots, the temperature of the cooling system is usually set below the IT requirements.
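The effect of recirculation on rack inlet temperature can be illustrated with a simple adiabatic mixing balance, in which the inlet air is a blend of cold supply air and recirculated hot exhaust air. This model, the function name and all numerical values are assumptions made for illustration and are not taken from the source; the sketch assumes equal specific heat for both air streams and no other heat gains.

```python
def rack_inlet_temp_c(supply_c, exhaust_c, recirc_fraction):
    """Mixed inlet temperature when a fraction of the intake is recirculated exhaust air."""
    if not 0.0 <= recirc_fraction <= 1.0:
        raise ValueError("recirc_fraction must be between 0 and 1")
    # Mass-weighted average of the two streams (equal specific heat assumed).
    return (1.0 - recirc_fraction) * supply_c + recirc_fraction * exhaust_c

# Assumed values: 18 C supply air and 35 C exhaust air.
for fraction in (0.0, 0.1, 0.3):
    inlet = rack_inlet_temp_c(18.0, 35.0, fraction)
    print(f"recirculation {fraction:.0%}: inlet {inlet:.1f} C")
```

With these assumed values, 30 per cent recirculation raises the inlet temperature from 18 °C to about 23.1 °C, which shows why operators compensate by lowering the supply temperature.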

Figure 5. Air flow illustration in data center vertical server rack layout.

Liquid cooled systems

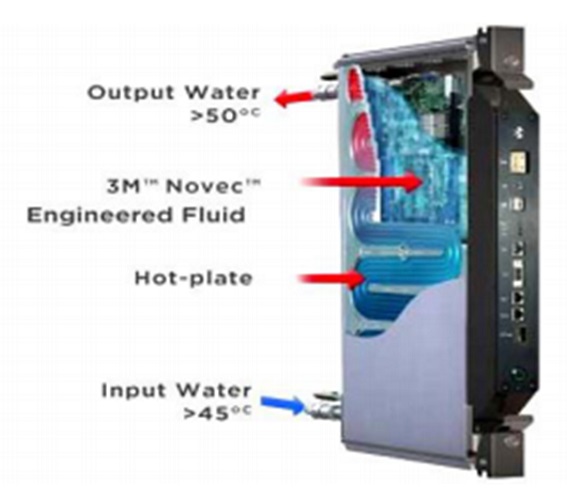

When data centers (DCs) have high-power-density equipment, air-cooled systems might not be the best solution in terms of efficiency and reliability. In such cases, different cooling technologies should be employed, such as the liquid-cooled systems shown in Figure 6, which can support high power densities and offer a wide range of advantages. The main advantage is the higher heat transfer capacity per unit, which allows operation with a lower temperature difference between the CPU and the coolant. Moreover, this solution eliminates two low-efficiency steps of air-cooled systems: heat-sink-to-air and air-to-coolant heat transfer. Hence, the system thermal resistance decreases and energy efficiency increases. Higher inlet temperatures can potentially eliminate the need for active heat rejection equipment and also open up the possibility of heat reuse. Liquid-cooled systems can be constructed using micro-channel flow and cold-plate heat exchangers in direct contact with components such as CPUs and DIMMs.

Another liquid cooling technology is the fully immersed direct liquid-cooled system. The server enclosure is sealed and contains a fluoro-organic dielectric coolant in direct contact with the electronics, which transfers heat to a water jacket by natural convection. The heat can then be transferred directly from the cabinet to an external loop and eventually released or reused.

Figure 6. Liquid cooling of data centers.

Future Cooling Strategies

Future DC applications will have a higher number of transistors per chip and higher clock rates, which in turn will lead to a further increase in heat dissipation density. To handle this high heat density, several other advanced cooling technologies are being developed, such as fully immersed direct liquid cooling, micro-channel single-phase flow and micro-channel two-phase flow. The cooling technology with by far the highest heat removal capacity is the micro-channel two-phase flow system, which takes advantage of the latent heat of the fluid. Compared with the sensible heating of a single-phase fluid, the use of latent heat greatly increases the convective heat transfer coefficient because of nucleate boiling. Two-phase cooling can remove higher heat fluxes while working with smaller mass flow rates and lower pumping power than a single-phase cooling solution. Moreover, if properly designed, two-phase cooling can provide a more uniform equipment temperature. The coolant temperature can reach 80 °C, which increases the quality of the heat and allows easy reuse in several applications. A CFD simulation found that a flow rate of 0.54 g/s with an entering temperature of 76.5 °C can be sufficient to cool an 85 W CPU. A two-phase cooling cycle has also been proposed and simulated to assess its relative energy performance and energy recovery opportunities. The results showed that the liquid single-phase cycle had a pumping power consumption 5.5 times higher than that of the HFC134a two-phase cooling cycle.
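The CFD figures quoted above can be sanity-checked with a steady-state energy balance, Q = m_dot × Δh. The sketch below computes the specific enthalpy change the coolant must absorb to remove 85 W at 0.54 g/s and, for comparison, the temperature rise a single-phase water stream would need at the same flow rate. The function names and the water specific heat of 4.18 kJ/(kg K) are assumptions introduced here; the source does not state which coolant the simulation used.

```python
def required_enthalpy_change_kj_per_kg(heat_w, mass_flow_g_per_s):
    """Specific enthalpy change needed to absorb heat_w at the given mass flow."""
    if mass_flow_g_per_s <= 0:
        raise ValueError("mass flow must be positive")
    # W / (g/s) = J/g, which is numerically equal to kJ/kg.
    return heat_w / mass_flow_g_per_s

def single_phase_temp_rise_k(delta_h_kj_per_kg, cp_kj_per_kg_k=4.18):
    """Temperature rise of a single-phase liquid absorbing delta_h (default cp: water, assumed)."""
    return delta_h_kj_per_kg / cp_kj_per_kg_k

# Values from the text: 85 W CPU, 0.54 g/s coolant flow.
delta_h = required_enthalpy_change_kj_per_kg(85.0, 0.54)
print(f"required enthalpy change: {delta_h:.1f} kJ/kg")
print(f"equivalent water temperature rise: {single_phase_temp_rise_k(delta_h):.1f} K")
```

The balance gives roughly 157 kJ/kg, which a single-phase water stream could only absorb with a temperature rise of about 38 K. Absorbing part of that heat through phase change, at nearly constant temperature, is what makes such small flow rates plausible.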

A further step for improving energy efficiency and reducing energy consumption in data centers is the capture and reuse of the waste heat produced by the IT equipment. The implementation of waste heat recovery measures can have a great effect on reducing CO2 emissions. Nevertheless, the main impediment to introducing a waste heat recovery unit (WHRU) in a data center is the low quality of the heat produced, despite its large quantity.

Another step toward reducing CO2 emissions in the data center industry is the use of renewable energy sources (RES) to cover part of data centers' overall energy consumption. The main obstacle is the intermittent nature of RES: data centers require energy 24 hours per day, every day, and it must be provided even when green power is not available.

Strategies for Slowing Rate of Heating

Despite the challenges posed by recent data center trends, it is possible to design the cooling system of any facility to allow for long runtimes on emergency power. Depending on the mission of the facility, it may be more practical to maximise runtimes within the limits of the current architecture and, at the same time, plan to ultimately power down IT equipment during an extended outage. There are four strategies for slowing the rate of heating:

- Maintain adequate reserve cooling capacity

- Connect cooling equipment to backup power

- Use equipment with shorter restart times

- Use thermal storage to ride out chiller-restart time
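The thermal storage strategy can be sized with a simple sensible-heat estimate: the ride-through time of a chilled-water buffer tank is its usable stored cooling energy divided by the heat load. The sketch below is a first-order estimate under stated assumptions (water properties of 1000 kg/m³ and 4.18 kJ/(kg K), a hypothetical tank size, usable temperature rise and load, and no mixing or distribution losses); it is not a design method taken from the source.

```python
WATER_DENSITY_KG_M3 = 1000.0   # assumed water density
WATER_CP_KJ_KG_K = 4.18        # assumed water specific heat

def ride_through_seconds(tank_volume_m3, usable_temp_rise_k, heat_load_kw):
    """Seconds a chilled-water tank can absorb heat_load_kw before warming by usable_temp_rise_k."""
    if heat_load_kw <= 0:
        raise ValueError("heat_load_kw must be positive")
    stored_kj = tank_volume_m3 * WATER_DENSITY_KG_M3 * WATER_CP_KJ_KG_K * usable_temp_rise_k
    return stored_kj / heat_load_kw  # kJ / kW = s

# Hypothetical case: 20 m3 tank, 6 K usable temperature rise, 500 kW heat load.
seconds = ride_through_seconds(20.0, 6.0, 500.0)
print(f"ride-through: {seconds / 60:.1f} minutes")
```

For this hypothetical case the tank provides roughly 17 minutes of cooling, a figure that would then be compared with the chiller restart time to judge whether the storage is adequate.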

Conclusion

Data centers enable nearly all aspects of modern society, and their demand for energy is ever increasing. They therefore need to be not only cost-efficient but also smart, sustainable and, ideally, part of the fight against climate change.